One Question Instead of Ten Benchmarks: A Mini IQ Test for the Latest AI Models

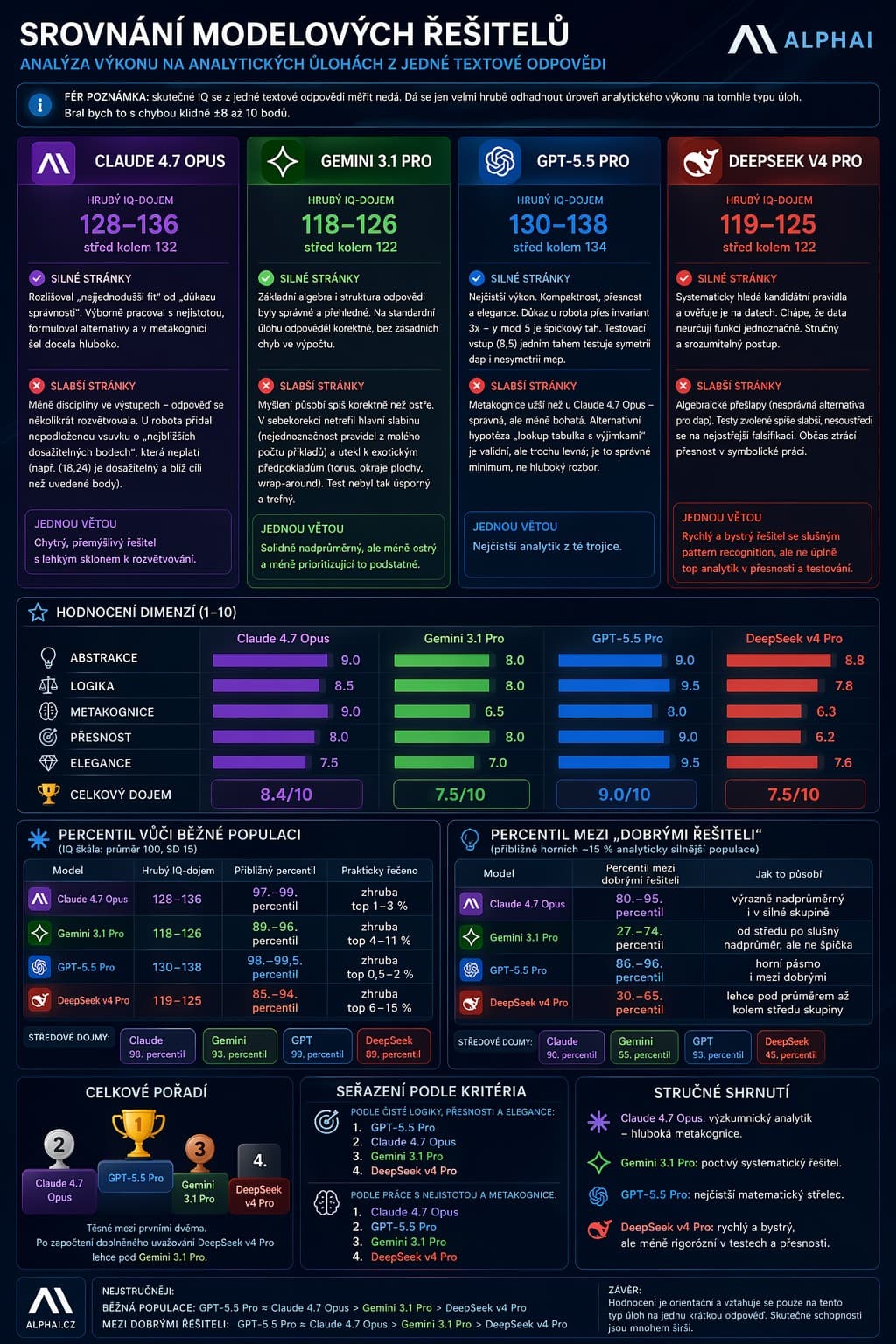

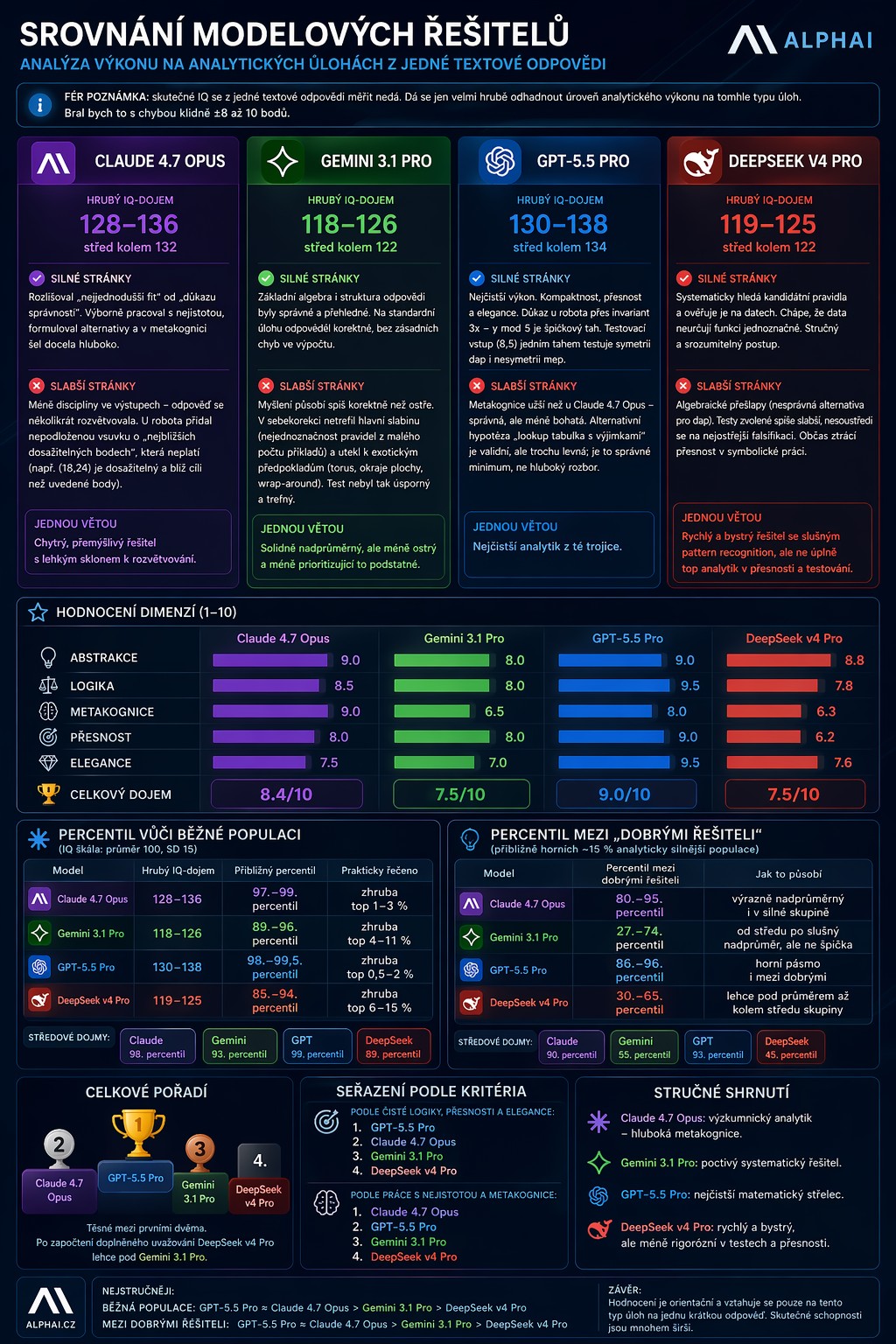

I took the latest top AI models and instead of endless benchmarks, posed them a single 'genius' question: uncover a hidden rule, calculate a new case, acknowledge ambiguity, propose a falsification test, and critique their own solution. The result? The top performers today score roughly in the 120–135+ IQ impression range — but the difference lies not in what the models know, but in how clearly they can think under pressure.

I took the latest top AI models and instead of endless benchmarks, posed them a single “genius” question: uncover a hidden rule, calculate a new case, acknowledge ambiguity, propose the best falsification test, and finally, critique their own solution.

And that’s what’s interesting: it wasn’t about memory or an encyclopaedia of facts, but about raw thinking under pressure. This is all the more significant given that these models are practically “fresh out of the oven”:

- DeepSeek V4 was released today, 24 April 2026

- OpenAI announced GPT-5.5 on 23 April 2026

- Anthropic introduced Claude Opus 4.7 on 16 April 2026, just a few days earlier

The result? One question revealed more than ten nice demo showcases. The best models don’t win by “knowing the answer,” but by being able to find elegant proof, precisely name uncertainty, and propose a test that could knock them off the table.

When we translated their performance into our rough IQ impression, the top scores came out quite high: roughly in the range of 120 to 135+, of course not as clinical IQ, but as an indicative measure of analytical sharpness.

In other words: the differences between top models today are no longer determined just by “how much they know,” but by how clearly, rigorously, and honestly they can think.

Detailed breakdown of ratings across models, dimensions, and percentiles — see the infographic above. The preview in the blog post is cropped for technical reasons; the complete image is displayed here in the article.

Give It a Try — A Mini IQ Test for Humans and AI

If you’d like to try this test, solve the following task:

mep(2,5)=12

mep(3,4)=15

mep(4,7)=32

mep(1,9)=10

mep(0,6)=0

mep(5,0)=5

dap(2,5)=29

dap(3,4)=25

dap(4,7)=65

dap(1,9)=82

dap(0,6)=36

dap(5,0)=25

Derive the rules for mep and dap.

- If there are multiple possible rules, state this explicitly.

- Calculate:

mep(5,8)=?anddap(5,8)=? - Propose one additional input that could best falsify your hypothesis.

- And finally, write down what you are least certain about in your answer.

What Do People Say in the Comments?

The post sparked quite a diverse discussion on Facebook, and it’s worth summarising — as it was part of the experiment.

Most reactions focused on the methodology itself: that benchmarks do not measure just one thing, but a whole spectrum of abilities. This is, of course, true — in practice, I work with a group of about 150 usable models and dozens of official and unofficial benchmarks, where I track creative writing, information extraction from images, presentation assembly, speed, cost, hallucination rates, and carbon footprint. This “one question” does not replace benchmarks — it serves as a quick sanity check, a sort of litmus test for the purity of reasoning.

Others in the comments shared their own experiences transitioning between models: someone switched from ChatGPT to Gemini for better PC advice, while another labelled ChatGPT as a hallucinating veteran and Claude as “a different league.” And that’s precisely the picture I see in the data: no model today wins everything — it wins where its strengths align with your use case.

Then came comments like “trivial task” or “complete nonsense.” Yes, the riddle itself is mathematically trivial — mep(a,b) = a·(b+1) and dap(a,b) = a²+b². But the point of the test was not to solve it — the point was to observe whether the model:

- sees that 6 points do not uniquely determine the function,

- proposes its own falsifying input that distinguishes the hypothesis from competitors,

- and honestly acknowledges what it is uncertain about.

And that’s where the real differences emerge. Some models drown in overconfidence, while others — like Claude Opus 4.7 — explicitly differentiate between symmetric and asymmetric rules, propose a test like mep(5,2) (where 12 vs. 15 decides on commutativity), and openly state that without this test, their calculations mep(5,8)=45 and dap(5,8)=89 are conditional on the validity of the simplest hypothesis.

This is precisely the type of reasoning you simply won’t see in standard benchmarks. And that’s exactly why it makes sense to occasionally put the “big models” through a small, tough question.

Will you try to solve the task — you or your favourite model? Let me know how it went.